Amazon Audiences Are Coming To Netflix: What Changes for Targeting and Measurement

Amazon Ads says buyers will be able to use Amazon Audiences for Netflix campaigns via Amazon DSP starting next quarter in the US. The practical change is not only new audience options, but also higher expectations for proving incrementality and managing frequency, recency, and reporting consistency in premium CTV. Marketers should baseline Netflix performance first, then test the audience layer with controlled variables so results are interpretable. Before scaling, align measurement definitions, attribution windows, and deduping assumptions across reporting sources.

As new audience signals enter premium CTV, the real work is proving what caused the lift.

Key takeaways

- Treat Amazon-Audiences-on-Netflix as a measurement change, not just a targeting add-on.

- Baseline Netflix performance first, then test the audience layer with controlled variables.

- Use a structured creative test matrix so “better targeting” does not mask weaker messaging.

- Align measurement hygiene upfront: windows, dedupe assumptions, and source of truth before budget expansion.

What changed: Amazon Audiences can be activated on Netflix via Amazon DSP

Amazon Ads says buyers can use Amazon Audiences for Netflix campaigns through Amazon DSP. Practically, audience segments typically built from commerce signals can be applied to Netflix ad buys executed in the DSP workflow.

Timing and availability matter for planning. As reported, the capability is expected to start next quarter in the US, so teams should treat the near term as preparation time for test design, creative readiness, and measurement QA.

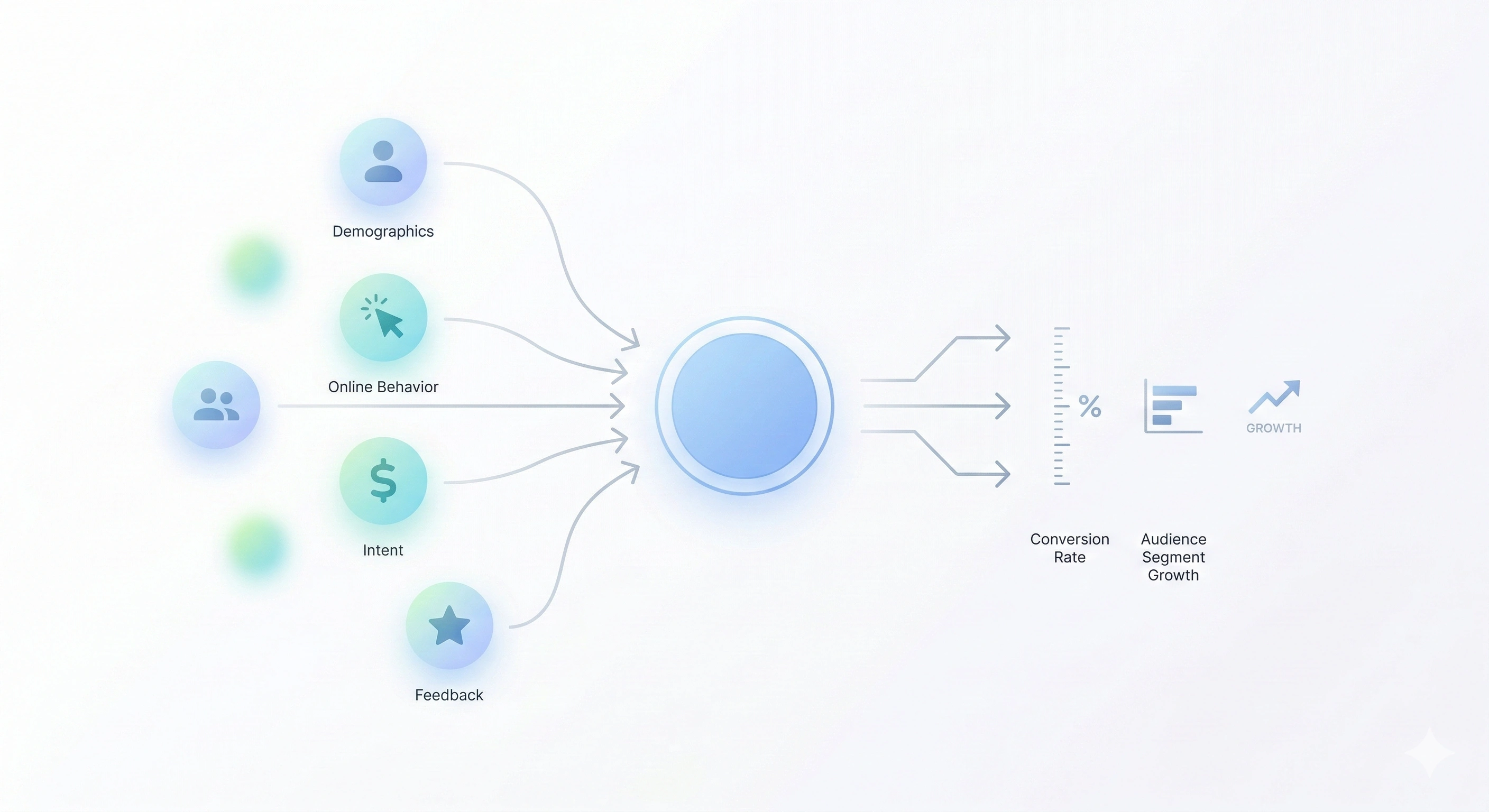

At a high level, “Amazon Audiences” implies the ability to use purchase and interest signals for audience selection. Even if the audience layer is the headline, the bigger operational question is how you will prove the audience layer drove incremental outcomes versus simply changing who saw the ads or how often they were exposed.

Why it matters: retail and commerce audiences move deeper into premium CTV

When retail and commerce audiences move deeper into premium CTV, scrutiny increases. Stakeholders tend to expect more than platform-level delivery improvements. They expect outcome measurement, clearer incrementality logic, and a defensible explanation for why results changed.

There are also operational implications that show up quickly in CTV planning:

- Audience strategy: Decide whether your goal is broad prospecting, category conquesting, or retaining existing customers, because each implies different success metrics and acceptable reach and frequency patterns.

- Frequency and recency: It can be tempting to define commerce-informed audiences narrowly. Narrow targeting can concentrate frequency, which can inflate short-term response signals while hurting true incremental lift.

- Incremental reach questions: You will likely be asked whether the new audience-enabled Netflix buys add reach versus simply shifting impressions among the same people already reached by other CTV and digital buys.
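To make the frequency concern concrete, the sketch below summarizes delivery from a list of exposed household IDs, one entry per impression. It is a minimal Python illustration, not an Amazon DSP or Netflix report format: the household IDs, the `frequency_profile` helper, and the frequency cap of 3 are all hypothetical. It reports reach, average frequency, and the share of impressions delivered after a household had already hit the cap.

```python
from collections import Counter


def frequency_profile(impressions: list[str], cap: int) -> dict[str, float]:
    """Summarize reach and frequency from one (hypothetical) household ID per impression."""
    per_household = Counter(impressions)  # household ID -> impressions served
    reach = len(per_household)
    total = len(impressions)
    # Impressions served after a household had already seen the ad `cap` times.
    beyond_cap = sum(max(0, n - cap) for n in per_household.values())
    return {
        "reach": reach,
        "avg_frequency": total / reach if reach else 0.0,
        "share_beyond_cap": beyond_cap / total if total else 0.0,
    }


# Same impression volume, delivered broadly vs. narrowly (illustrative data).
broad = frequency_profile(["h1", "h2", "h3", "h4", "h5", "h6"] * 2, cap=3)
narrow = frequency_profile(["h1", "h2", "h3"] * 4, cap=3)
print(broad)   # reach 6, average frequency 2.0, nothing beyond the cap
print(narrow)  # reach 3, average frequency 4.0, 25% of impressions beyond the cap
```

With the same 12 impressions, the narrow line reaches half as many households at twice the frequency, and a quarter of its impressions land beyond the cap.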

The biggest risk is attribution error in decision-making. If performance improves, it is easy to credit “better targeting,” when the real driver might be creative differences, supply differences, or measurement differences across reporting sources. A disciplined test plan reduces the chance of scaling a false win.

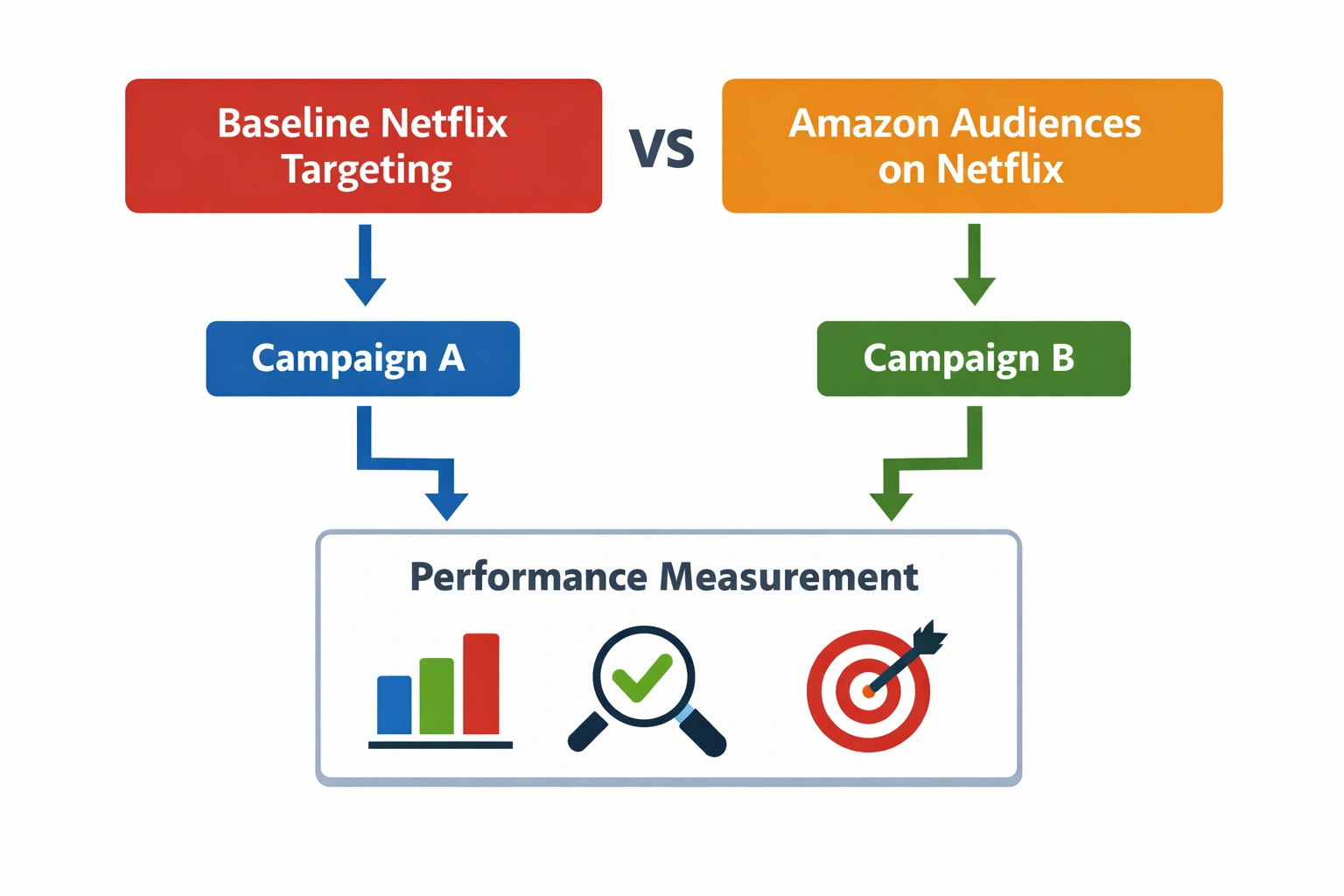

A clean testing plan: baseline Netflix buys vs Amazon-Audiences-on-Netflix

Hold budgets, flight dates, and creative constant so the audience layer is the main variable.

Start by defining the job-to-be-done. Without that, you will select metrics that cannot answer the question you actually care about.

- Prospecting: Prioritize incremental reach, qualified site visits, or other upper-funnel indicators aligned to new customer growth.

- Conquesting: Prioritize evidence that you reached and influenced people who were likely to choose alternatives.

- Retention: Prioritize outcomes tied to repeat behavior and appropriate recency controls, while being careful not to “measure” what would have happened anyway.

Next, set a baseline Netflix line item that is intentionally simple. The goal is not to optimize immediately. The goal is to create a stable comparison point.

- Use matched budgets so one line does not win by brute force.

- Use matched flight dates so seasonality and promotions do not contaminate the comparison.

- Use matched creative where possible so you are not comparing different messages, offers, or production quality.
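One lightweight way to enforce the matching is to write both line-item setups down as data and confirm they differ only in the audience layer. The sketch below assumes hypothetical setting names (`budget`, `flight`, `creative`, `audience`) and values; they are not Amazon DSP fields, and a real check would compare whatever settings your DSP export actually contains.

```python
def differing_settings(baseline: dict, experiment: dict) -> set[str]:
    """Return the names of settings whose values differ between two line items."""
    keys = baseline.keys() | experiment.keys()
    return {key for key in keys if baseline.get(key) != experiment.get(key)}


# Hypothetical line-item setups; only the audience layer should change.
baseline = {"budget": 50_000, "flight": "weeks 1-4", "creative": "spot_a", "audience": None}
experiment = {**baseline, "audience": "amazon_audiences_segment"}

unexpected = differing_settings(baseline, experiment) - {"audience"}
if unexpected:
    raise ValueError(f"Test is confounded; line items also differ in {sorted(unexpected)}")
```

If someone later swaps the creative or trims the budget on only one line, the check fails loudly instead of letting the comparison quietly lose its meaning.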

Then add the experimental line item with Amazon Audiences applied. Pre-define success metrics and guardrails before launch so you do not “move the goalposts” after results appear. Guardrails can include acceptable frequency thresholds, minimum reach, and a plan for what you will do if delivery concentrates too narrowly.
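Guardrails are easiest to honor when they are recorded as explicit numbers before launch and checked mechanically in flight. The sketch below is illustrative only: the `Guardrails` fields, the threshold values, and the delivery figures are assumptions, not recommendations or platform defaults.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    """Thresholds agreed before launch (values used below are illustrative)."""
    max_avg_frequency: float
    min_reach: int


def guardrail_breaches(reach: int, impressions: int, rails: Guardrails) -> list[str]:
    """List every pre-registered guardrail the current delivery violates."""
    breaches = []
    avg_frequency = impressions / reach if reach else float("inf")
    if avg_frequency > rails.max_avg_frequency:
        breaches.append(f"average frequency {avg_frequency:.1f} exceeds {rails.max_avg_frequency}")
    if reach < rails.min_reach:
        breaches.append(f"reach {reach:,} is below {rails.min_reach:,}")
    return breaches


rails = Guardrails(max_avg_frequency=6.0, min_reach=200_000)
print(guardrail_breaches(reach=150_000, impressions=1_200_000, rails=rails))
```

Freezing the thresholds in a dataclass makes them harder to edit casually once results start coming in; a non-empty list is the trigger for the contingency plan agreed before launch.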

Keep the test readable. If you change too many variables at once, you will not know whether the audience layer helped, the creative helped, or the allocation and pacing logic changed delivery patterns.
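Once both lines have run, a basic significance check helps keep noise from being read as lift. The sketch below applies a standard two-sided, two-proportion z-test to response counts (for example, households that visited the site) out of households reached on each line. The counts are made up, and using reached households as the denominator is an assumption; use whatever unit your agreed source of truth reports.

```python
import math


def two_proportion_z_test(resp_a: int, n_a: int, resp_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference in response rates between two line items.

    Returns (z, p_value), pooling both lines under the null hypothesis
    that they share one underlying response rate.
    """
    rate_a, rate_b = resp_a / n_a, resp_b / n_b
    pooled = (resp_a + resp_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:  # both lines at 0% or both at 100%: no detectable difference
        return 0.0, 1.0
    z = (rate_b - rate_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    return z, math.erfc(abs(z) / math.sqrt(2))


# Baseline line (a) vs. Amazon-Audiences line (b); counts are illustrative.
z, p = two_proportion_z_test(resp_a=100, n_a=10_000, resp_b=150, n_b=10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the two line items differ beyond chance. It does not, on its own, prove incrementality versus no exposure, because the lines reach different people; that question needs a holdout or a dedicated lift study.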

Separate targeting lift from creative lift with a structured creative test matrix

Test multiple messages against the same audience setup to isolate creative effects from targeting effects.

If you only change targeting, you might miss that creative is mismatched to the audience. If you only change creative, you might misread the power of the audience. A simple creative test matrix helps isolate whether the message works for the targeted group.

Consider running 2–3 message variants against the same audience setup. The goal is not to win an awards show. The goal is to validate message match and reduce the chance that one creative happens to align with an audience while another does not.

- Variant A: A clear, general value proposition.

- Variant B: A more specific value proposition oriented to the category need.

- Variant C (optional): A differentiator-led message that tests whether the audience responds to a distinct reason to choose you.
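Reading the matrix is simple arithmetic once each message runs on both the baseline line and the Amazon-Audiences line. The sketch below assumes a full grid of variants by audience setup and uses hypothetical labels and made-up response rates per 1,000 households reached. It separates the average targeting effect from how much that effect depends on the message (the interaction), and reports the creative spread within each setup.

```python
SETUPS = ("baseline", "amazon_audiences")  # hypothetical labels for the two line items


def decompose(rates: dict[tuple[str, str], float]) -> dict[str, object]:
    """Split a variant-by-setup grid of response rates into targeting and creative effects.

    `rates` maps (variant, setup) to a response rate and must cover every combination.
    """
    variants = sorted({variant for variant, _ in rates})
    # Targeting effect: same message, different audience setup.
    targeting = {v: rates[(v, SETUPS[1])] - rates[(v, SETUPS[0])] for v in variants}
    avg_targeting = sum(targeting.values()) / len(variants)
    # Interaction: how far each message's targeting effect departs from the average.
    interaction = {v: targeting[v] - avg_targeting for v in variants}
    # Creative spread: same audience setup, best message minus worst message.
    spread = {
        s: max(rates[(v, s)] for v in variants) - min(rates[(v, s)] for v in variants)
        for s in SETUPS
    }
    return {
        "targeting_by_variant": targeting,
        "avg_targeting_effect": avg_targeting,
        "interaction": interaction,
        "creative_spread": spread,
    }


# Responses per 1,000 reached households (illustrative numbers only).
rates = {
    ("A", "baseline"): 10.0, ("A", "amazon_audiences"): 12.0,
    ("B", "baseline"): 11.0, ("B", "amazon_audiences"): 17.0,
}
print(decompose(rates))
```

In these illustrative numbers, the targeting effect is three times as large for Variant B as for Variant A, and the creative spread widens from 1 to 5 under the Amazon-Audiences setup. That pattern points to message match rather than a uniform audience effect.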

Keep landing and offer consistency to reduce confounds. If one creative sends people to a different landing experience or uses a different offer, you are testing a different funnel, not just a different message.

Also validate product availability and relevance to the targeted audience. If the audience is defined by interest or purchase-intent signals but the product is unavailable, out of stock, or not relevant in key areas, the test can produce misleading results. The outcome might look like “the audience didn’t work,” when the real issue was fulfillment or a mismatch between the message and what people can actually buy.

Measurement hygiene and QA before scaling

The more systems involved, the more likely reporting differences will create false confidence or false alarms. Before scaling, treat measurement hygiene as a launch requirement, not a postmortem task.

First, align attribution windows and reporting definitions before launch. Decide what window you will use for evaluating outcomes and ensure stakeholders understand what is included and excluded. If one report uses a different window than another, comparisons become unreliable.
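The effect of the window choice is easy to see with a toy example. The sketch below counts conversions that fall within a fixed window after a household's exposure. It assumes one exposure timestamp per household and shared household IDs between exposure and conversion data, which real reporting sources may not provide; all IDs, dates, and window lengths are hypothetical.

```python
from datetime import datetime, timedelta


def attributed_conversions(
    exposures: dict[str, datetime],
    conversions: list[tuple[str, datetime]],
    window: timedelta,
) -> int:
    """Count conversions that occur within `window` after the household's exposure."""
    count = 0
    for household, converted_at in conversions:
        exposed_at = exposures.get(household)
        # Unexposed households and conversions outside the window are not credited.
        if exposed_at is not None and exposed_at <= converted_at <= exposed_at + window:
            count += 1
    return count


exposed = datetime(2025, 1, 1)
exposures = {"h1": exposed, "h2": exposed, "h3": exposed}
conversions = [
    ("h1", datetime(2025, 1, 3)),   # 2 days after exposure
    ("h2", datetime(2025, 1, 10)),  # 9 days after exposure
    ("h3", datetime(2025, 1, 20)),  # 19 days after exposure
    ("h4", datetime(2025, 1, 2)),   # never exposed
]
for days in (7, 14, 30):
    print(days, attributed_conversions(exposures, conversions, timedelta(days=days)))
```

The same delivery yields one, two, or three credited conversions depending only on the window, which is why two reports using different windows cannot be compared directly.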

Second, confirm deduping assumptions and how incremental reach is evaluated across buys. If you are running multiple CTV or video buys, “reach” can be overstated if deduplication is not consistent or if the assumption set differs by source. Make sure you know what is deduped, what is not, and how that impacts incremental reach claims.
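The sketch below shows why summed reach overstates audience size and how incremental reach differs from total reach. It assumes every buy reports the same household identifiers so sets can be compared directly, which real CTV reporting sources often do not; the buy names and IDs are hypothetical.

```python
def reach_summary(buys: dict[str, set[str]], new_buy: str) -> dict[str, int]:
    """Compare summed, deduplicated, and incremental reach across buys."""
    summed = sum(len(households) for households in buys.values())
    deduplicated = len(set().union(*buys.values()))
    # Everyone reached by any buy other than the one being evaluated.
    others = set().union(*(h for name, h in buys.items() if name != new_buy))
    incremental = len(buys[new_buy] - others)
    return {"summed": summed, "deduplicated": deduplicated, "incremental": incremental}


buys = {
    "other_ctv": {"h1", "h2", "h3", "h4"},
    "online_video": {"h3", "h4", "h5"},
    "netflix_amazon_audiences": {"h4", "h5", "h6", "h7"},
}
print(reach_summary(buys, new_buy="netflix_amazon_audiences"))
```

Here the Netflix line reaches four households, but only two of them were not already reached elsewhere, and adding up per-buy reach (11) overstates the unduplicated total (7).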

Third, decide the source of truth and reconcile discrepancies between DSP and publisher reporting. In practice, different systems can count impressions, reach, and other metrics differently. Agree in advance which report will be used for which decision:

- Optimization decisions: Which source will guide pacing and in-flight adjustments?

- Performance evaluation: Which definitions will be used to declare a test winner?

- Executive reporting: Which numbers will be presented as the final view, and what caveats will be documented?
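Reconciliation goes faster when the tolerance for discrepancies is also agreed in advance. The sketch below flags metrics whose relative gap from the designated source of truth exceeds a tolerance. Which source is treated as truth, the 10% tolerance, and the figures are all illustrative assumptions, not observed DSP or Netflix numbers.

```python
def discrepancies(
    source_of_truth: dict[str, float],
    other: dict[str, float],
    tolerance: float = 0.10,
) -> dict[str, float]:
    """Return {metric: relative gap} for shared metrics whose gap exceeds `tolerance`."""
    flagged = {}
    for metric in source_of_truth.keys() & other.keys():
        reference, value = source_of_truth[metric], other[metric]
        if reference == 0:
            gap = 0.0 if value == 0 else float("inf")
        else:
            gap = abs(value - reference) / abs(reference)
        if gap > tolerance:
            flagged[metric] = gap
    return flagged


# Illustrative counts; here the publisher report is the agreed source of truth.
publisher = {"impressions": 1_000_000, "reach": 250_000}
dsp = {"impressions": 1_040_000, "reach": 190_000}
print(discrepancies(publisher, dsp))
```

In this example a 4% impression gap passes, while a 24% reach gap is flagged for investigation before anyone uses reach to declare a winner or expand budget.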

Only after these checks are done should you consider meaningful budget expansion. Scaling before measurement alignment can lock in a strategy that looks good on paper but cannot be validated with consistent definitions.

Frequently asked questions

What does it mean that Amazon Audiences are coming to Netflix?

Amazon Ads says buyers will be able to activate Amazon Audiences for Netflix campaigns via Amazon DSP starting next quarter in the US. In practice, Amazon Audiences segments can be applied when buying Netflix ads through the DSP.

How do you test Amazon Audiences on Netflix without biasing results?

Create a baseline Netflix line item and an experimental line item with Amazon Audiences applied, while keeping budgets, flight dates, and creative matched as closely as possible. Pre-define success metrics and guardrails before launch so you do not interpret noise as lift.

How can marketers separate audience targeting lift from creative lift in CTV?

Use a simple creative test matrix: run 2–3 message variants while holding the audience setup constant, and keep landing experience and offer consistent. This helps you see whether performance changes are driven by who you reached (targeting) or what you said (creative).

What measurement and reporting checks should be done before scaling Amazon DSP Netflix campaigns?

Align attribution windows and reporting definitions ahead of launch, confirm deduping assumptions for reach and incremental reach across buys, and decide a source of truth for reporting. Reconcile expected discrepancies between DSP and publisher reporting before using results to scale budgets.
