Audio-Only Digital Advertising: A Measurement and Testing Framework (Using Men’s Wearhouse as the Example)


Audio-only digital campaigns can be effective for growing an audience, but they are easy to mis-measure if you default to last-click expectations. Without visuals, the creative has to do more work, so validate clarity, brand identification, and offer recall before scaling spend. A practical framework has four steps: define a clear hypothesis (such as incremental audience growth), run pre-launch audio QA, design controlled test cells to isolate learning, and monitor incremental reach plus early resonance signals on a consistent reporting cadence. Men’s Wearhouse provides an example of an audience-growth outcome from a campaign run solely on digital audio.

A practical framework for testing and measuring audio-only campaigns beyond last-click.

Key takeaways

- Treat audio creative as a testable asset: script clarity, brand identification, CTA phrasing, length, and rotation are controllable variables.

- Define success metrics that fit audio’s job (for example, incremental reach and audience resonance), rather than defaulting to last-click.

- Use a validation ladder: pre-launch audio QA, controlled test cells, early resonance checks, then scale with incremental reporting.

- Build early-warning reporting to detect audience mismatch or weak recall before expanding spend.

What an audio-only campaign changes (and why marketers mis-measure it)

In an audio-only campaign, there is no visual reinforcement. The listener cannot “see” a logo, product shot, headline, or offer card, so message clarity and distinctiveness have to come through in the spoken words and structure. If the ad is confusing or the brand is not clearly identified, you can reach people without building usable memory.

Audio is also easy to mis-measure when reporting defaults to last-click or immediate site actions. Many listeners are not in a position to click, and even when they do respond later, attribution may not be captured by click-based models. If you only look for immediate clicks, you can mistakenly conclude the campaign “didn’t work,” even when it contributed to audience growth or brand outcomes.

To avoid that trap, align evaluation to the campaign hypothesis. If the intent is incremental audience growth, then measurement should emphasize who you reached that you would not have reached otherwise, and whether they show early signs of resonance and recall. As one example of audio being used as a standalone channel, Men’s Wearhouse ran a campaign solely on digital audio and used it to grow its audience.

Start with a clear hypothesis and success definition (audience growth vs. response)

Start by stating the primary objective in one sentence. For an audio-only campaign, that could be: “Drive incremental audience growth and create downstream impact.” That phrasing matters because it sets expectations for what you will measure and how you will interpret early results.

Then define what “incremental audience growth” means in your reporting. At a principle level, you are looking for evidence that the campaign is expanding the pool of people you can reach, not merely redistributing exposure among people you already reach through other channels.

Add secondary signals that act as resonance proxies and audience fit checks. These do not need to be vendor-specific to be useful. Examples of principle-level checks include:

- Early recall indicators: do exposed listeners recognize the brand and main message?

- Message comprehension: can they repeat the offer and what action to take?

- Audience fit: are you reaching the intended audience characteristics in aggregate?

Finally, pre-define guardrails so reporting does not drift back to last-click. Document what would count as “on track” for an audience-growth hypothesis (for example, incremental reach plus stable or improving resonance checks), and what would count as “needs changes” (for example, weak brand identification or poor offer comprehension even when delivery is strong). These guardrails make it easier to keep stakeholders aligned during weekly updates.

Pre-launch QA for audio creative: remove ambiguity before spend

QA the script first, then plan intentional variants before spending budget.

Because audio-only removes visuals, pre-launch QA should focus on eliminating ambiguity in the script and read. Treat this as creative quality assurance, not just “approval.” A simple checklist can prevent wasting budget on an ad that is delivered but not understood.

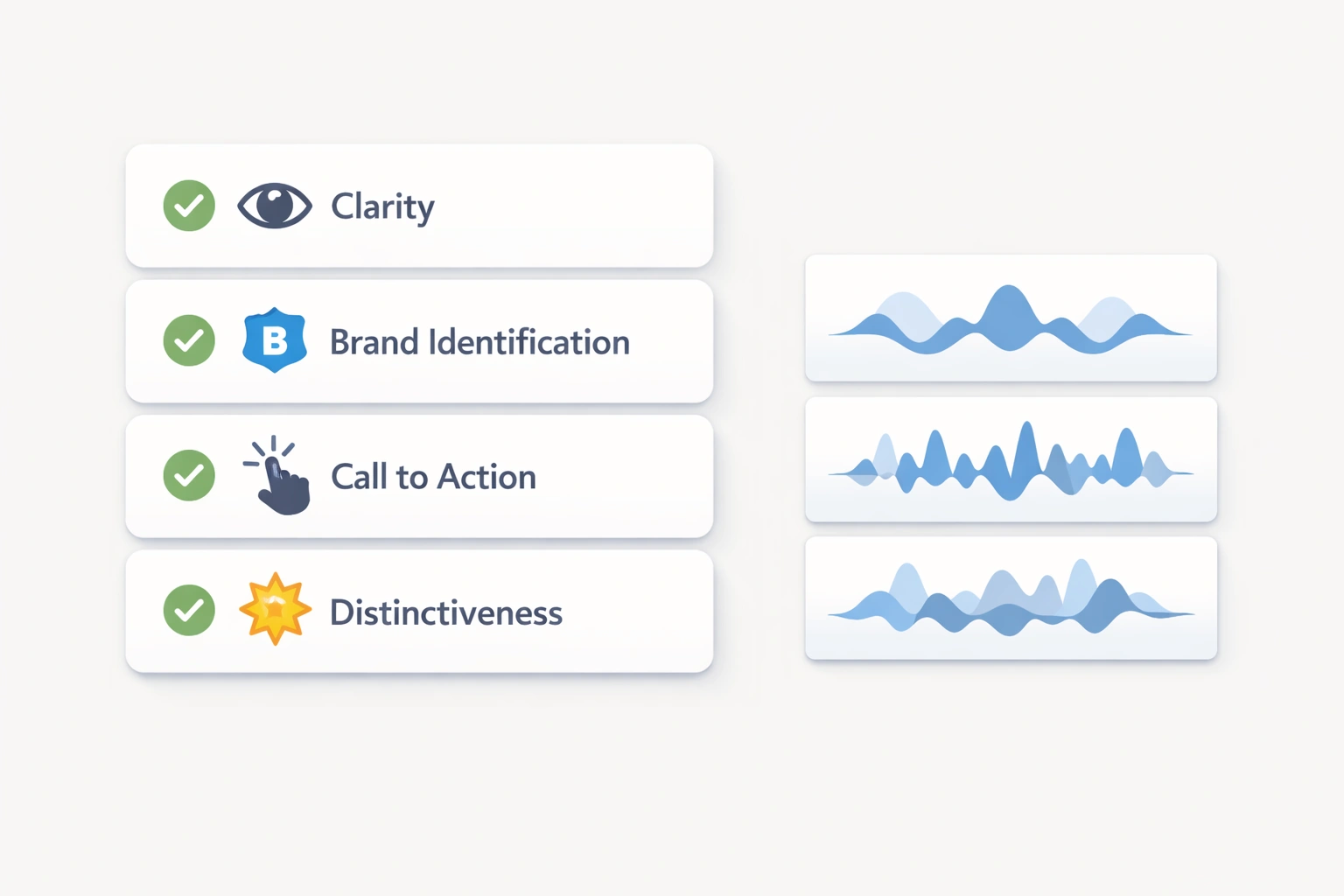

Script and read QA checklist (principles-based):

- Clarity: Is the message understandable on first listen, at normal playback speed?

- Brand identification: Is the brand name said clearly and early enough that listeners know who is speaking?

- Offer and CTA comprehension: Is the offer (if any) unambiguous, and is the CTA specific and easy to remember?

- Distinctiveness: Does the opening line or premise help the ad stand out from other audio spots?

Next, build a variant plan so you can learn quickly. In audio, small wording changes can materially change comprehension and recall, so plan variants intentionally rather than improvising mid-flight. Common variables you can test without changing the product or landing experience include:

- CTA or offer phrasing (for example, different verbs or simpler redemption instructions)

- Message order (lead with the problem, the benefit, or the brand)

- Length (shorter vs. longer, keeping the core message consistent)

Finally, define a rotation and frequency plan. Audio ads can wear out, and tolerance can drop when the same spot repeats too often. You do not need a complicated model to start. Set expectations for how many variants will run, how they will rotate, and what signals will trigger a refresh. This reduces the risk that performance changes are incorrectly attributed to targeting when the real issue is creative fatigue.
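
One way to make the rotation and refresh expectations explicit is to write them down as a small plan object. The sketch below is illustrative only: the class name, field names, variant labels, and the numeric values are all assumptions, not figures from any campaign.

```python
from dataclasses import dataclass
from itertools import cycle, islice


@dataclass
class RotationPlan:
    """Hypothetical container for a rotation and refresh plan."""
    variants: list                # creative variants that will run
    weekly_frequency_cap: int     # planning value; enforcement happens in the ad platform
    refresh_recall_drop: float    # refresh when recall falls this far below its peak

    def schedule(self, slots):
        """Even round-robin rotation of the variants across ad slots."""
        return list(islice(cycle(self.variants), slots))

    def needs_refresh(self, peak_recall, current_recall):
        """True when recall has dropped from its peak by at least the agreed amount."""
        return (peak_recall - current_recall) >= self.refresh_recall_drop


# Assumed example values for illustration.
plan = RotationPlan(
    variants=["variant_a", "variant_b", "variant_c"],
    weekly_frequency_cap=4,
    refresh_recall_drop=0.05,
)
print(plan.schedule(5))
print(plan.needs_refresh(peak_recall=0.30, current_recall=0.24))
```

Agreeing on the refresh trigger before launch makes it easier to tell creative fatigue apart from a targeting problem later.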

Design test cells that isolate learning (creative and targeting)

Separate creative and audience variables so results explain what changed.

To learn efficiently, design controlled test cells that isolate the variables you want to understand. The goal is not to test everything at once, but to separate creative questions from targeting questions so you can identify what is driving outcomes.

A practical approach is to create cells by:

- Creative variant: different scripts, CTAs, message order, or lengths

- Audience or targeting approach: different audience definitions or contextual approaches that reflect your strategy
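
As a minimal sketch of the cell structure above, the two dimensions can be crossed into a grid so that every cell is identified by exactly one creative variant and one audience approach. The labels and function names here are hypothetical placeholders.

```python
from itertools import product

# Hypothetical labels; replace with your own variants and audience definitions.
CREATIVE_VARIANTS = ["brand_first_30s", "benefit_first_30s"]
AUDIENCE_APPROACHES = ["contextual", "defined_audience"]


def build_test_cells(creatives, audiences):
    """Return one cell per (creative, audience) pair."""
    return [
        {"cell_id": f"{c}__{a}", "creative": c, "audience": a}
        for c, a in product(creatives, audiences)
    ]


def single_variable_comparisons(cells, baseline):
    """Cells that differ from the baseline in exactly one variable.

    Comparing the baseline only against these cells keeps a creative
    question separate from a targeting question.
    """
    return [
        cell for cell in cells
        if (cell["creative"] != baseline["creative"])
        != (cell["audience"] != baseline["audience"])
    ]


cells = build_test_cells(CREATIVE_VARIANTS, AUDIENCE_APPROACHES)
clean_comparisons = single_variable_comparisons(cells, baseline=cells[0])
print([c["cell_id"] for c in clean_comparisons])
```

With two variants and two audience approaches this produces four cells, two of which differ from the baseline in only one variable.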

Sequencing matters. Start with a small test, run a resonance check, then expand. This “test then scale” approach keeps your learning clean and prevents premature budget expansion into a cell that is delivering impressions but not generating comprehension or recall.

Define decision rules before launch so optimization is consistent and not reactive. Examples of decision rules at a principle level include:

- If reach delivery is strong but brand identification is weak, revise the opening and brand mention timing before scaling.

- If brand identification is strong but offer recall is weak, simplify the offer language or tighten the CTA.

- If recall is acceptable but signals suggest an audience mismatch, adjust the audience approach rather than rewriting the entire script.

These rules keep changes focused: fix the most likely bottleneck first (creative clarity vs. audience fit), retest, then move forward.
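
The decision rules above can be written down as a small function so that every weekly review applies them the same way. This is a sketch under stated assumptions: the function name and boolean inputs are hypothetical, how you decide that a signal is "strong" is left to your own thresholds, and the first branch (a delivery check) is an added assumption that is not one of the rules listed above.

```python
def next_action(reach_strong, brand_id_strong, offer_recall_strong, audience_fit_ok):
    """Return the single next step for a test cell, checking bottlenecks in order."""
    if not reach_strong:
        # Assumption, not from the rules above: without delivery there is nothing to read.
        return "fix delivery before interpreting results"
    if not brand_id_strong:
        return "revise the opening and brand mention timing"
    if not offer_recall_strong:
        return "simplify the offer language or tighten the CTA"
    if not audience_fit_ok:
        return "adjust the audience approach"
    return "scale with incremental reporting"


# Example: delivery and brand identification are fine, offer recall is weak.
print(next_action(True, True, False, True))
```

Returning exactly one action per review mirrors the "one focused change at a time" principle.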

Reporting cadence: incremental reach, resonance, and early-warning signals

Audio-only campaigns benefit from a reporting cadence that separates delivery, incremental reach, and resonance. This separation helps you avoid both false negatives caused by delayed response and false positives caused by delivery volume that does not translate into understanding.

Incremental reach reporting should quantify how much new audience the campaign adds relative to what you would have reached without it. Frame this as a contribution to audience growth rather than a replacement for direct response metrics. If the campaign’s job is expansion, incremental reach is a primary lens.
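
At its simplest, incremental reach is a set difference: the people reached by audio who were not reached by your other channels. The sketch below assumes you have deduplicated, privacy-safe identifiers for both groups, which in practice is often modeled or supplied by a measurement partner rather than observed directly. The function name and sample identifiers are hypothetical.

```python
def incremental_reach(audio_ids, baseline_ids):
    """Count audio-reached identifiers that the baseline channels did not reach."""
    audio = set(audio_ids)
    baseline = set(baseline_ids)
    new_audience = audio - baseline
    share = len(new_audience) / len(audio) if audio else 0.0
    return {
        "audio_reach": len(audio),
        "incremental_reach": len(new_audience),
        "incremental_share": share,  # fraction of audio reach that is new
    }


# Made-up identifiers for illustration only.
report = incremental_reach(
    audio_ids=["a", "b", "c", "d"],
    baseline_ids=["c", "d", "e"],
)
print(report)
```

Reporting the share alongside the raw count shows whether the campaign is expanding the audience or mostly re-reaching people you already had.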

Brand lift and resonance measurement can be planned without tying yourself to a specific vendor. At a principle level, you are looking for:

- Brand recognition or recall shifts among exposed vs. unexposed groups

- Message takeaway comprehension (what did they think the ad said?)

- Offer or CTA recall (can they repeat what to do next?)
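
An exposed vs. unexposed comparison like the ones above reduces to a difference between two proportions. The following sketch computes absolute lift and a pooled two-proportion z-score as a rough check on whether the difference is larger than sampling noise. The function name and the survey counts are made up for illustration, and it assumes the two groups are otherwise comparable.

```python
from math import sqrt


def absolute_lift(exposed_yes, exposed_n, control_yes, control_n):
    """Return (lift, z) for recall among exposed vs. unexposed respondents."""
    p_exposed = exposed_yes / exposed_n
    p_control = control_yes / control_n
    lift = p_exposed - p_control

    # Pooled standard error for a two-proportion z-test.
    pooled = (exposed_yes + control_yes) / (exposed_n + control_n)
    se = sqrt(pooled * (1 - pooled) * (1 / exposed_n + 1 / control_n))
    z = lift / se if se > 0 else 0.0
    return lift, z


# Made-up counts: 60 of 200 exposed and 40 of 200 unexposed respondents recall the brand.
lift, z = absolute_lift(60, 200, 40, 200)
print(f"lift = {lift:.1%}, z = {z:.2f}")
```

The same function works for message comprehension or CTA recall; only the survey question behind the counts changes.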

Early-warning signals help you catch mismatch before you scale spend. Build a simple monitoring view that answers:

- Who was reached vs. who was intended (directional audience fit check)

- Whether expected early signals appear (recall and comprehension checks)

- Whether results change as frequency accumulates (a clue for wearout or tolerance issues)
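
For the frequency question in particular, one simple view is recall rate by exposure bucket. The sketch below assumes you can pair each survey response with an exposure count; the bucket boundaries, function name, and sample data are assumptions chosen only for illustration.

```python
from collections import defaultdict


def recall_by_frequency(responses, buckets=((1, 2), (3, 5), (6, None))):
    """Group (exposures, recalled) pairs into frequency buckets and return recall rates.

    A bucket with an upper bound of None is open-ended. Responses with an
    exposure count below the first bucket are skipped.
    """
    totals = defaultdict(lambda: [0, 0])  # label -> [recalled count, respondents]
    for exposures, recalled in responses:
        for low, high in buckets:
            if exposures >= low and (high is None or exposures <= high):
                label = f"{low}+" if high is None else f"{low}-{high}"
                totals[label][0] += int(recalled)
                totals[label][1] += 1
                break
    return {label: yes / n for label, (yes, n) in totals.items()}


# Made-up responses: (number of exposures, recalled the brand?)
sample = [(1, True), (2, False), (4, True), (4, True), (7, False), (8, True)]
rates = recall_by_frequency(sample)
print(rates)
```

A recall rate that rises and then falls in the highest bucket is a directional clue for wearout, not proof, especially with small samples.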

Keep the cadence consistent. For example, review early resonance signals during the initial test window, make one focused change at a time, and only then broaden reach or budget. This matches the validation ladder: QA first, controlled testing second, resonance confirmation third, and scaling with incremental reporting last.

Frequently asked questions

How do you measure the success of an audio-only digital advertising campaign?

Measure success against the campaign hypothesis rather than defaulting to last-click. If the goal is audience growth, prioritize incremental reach and supporting resonance checks like brand identification, message comprehension, and CTA or offer recall. Use a structured approach: pre-launch creative QA, controlled test cells, early resonance validation, then scale with consistent incremental reporting.

What metrics make sense for podcast and streaming audio ads if last-click is unreliable?

Use metrics aligned to audio’s role: incremental reach (audience expansion) and resonance measures like brand recall, message takeaway comprehension, and offer or CTA recall among exposed vs. unexposed groups. Track early-warning signals that indicate audience mismatch or creative ambiguity so you can adjust before scaling.

How do you test audio ad creative (scripts, CTAs, and lengths) before scaling a campaign?

Start with pre-launch audio QA to confirm clarity, early brand identification, and offer or CTA comprehension. Then run controlled creative test cells that vary one dimension at a time, such as CTA phrasing, message order, or length. Review early resonance signals, apply pre-defined decision rules, and only scale the variants that demonstrate clear comprehension and recall.

How can marketers measure incremental reach and brand lift from digital audio ads?

For incremental reach, report how much unique audience the audio campaign adds relative to what would have been reached without it. For brand lift, compare exposed vs. unexposed groups on outcomes like brand recall and message comprehension. Use a steady reporting cadence so you can see whether lift and resonance improve, hold, or decline as frequency and rotation change.
